When Scammers Clone Voices: What FPL Fraud Means for VO Pros

AI voice scams impersonating FPL reps show why verified human VO talent and consent-based licensing matter more than ever for working pros.

AI Voice Cloning Hits Utility Customers in Florida

Gulf Coast News reported that scammers are using AI-cloned voices to impersonate Florida Power & Light representatives in phone calls to customers. The callers sound convincing enough to pressure people into handing over payment information or personal details, often under threat of service shutoff. You can read the original report from Gulf Coast News and Weather for the full consumer warning.

For working voice actors, this story lands differently than it does for the general public. We know exactly how voice cloning works, how little audio it takes to train a convincing model, and how quickly the output crosses into territory that harms real people. The FPL impersonation calls are a downstream symptom of a technology that has outpaced its own guardrails.

The Fraud Angle Is Changing the Conversation

Most industry discussion around AI voice has focused on job displacement: e-learning work drying up, explainer videos going synthetic, indie game studios trying TTS before booking a human. That conversation is ongoing and important. The impersonation scam angle adds something new. It moves AI voice from a business ethics debate into a consumer protection one.

When a retiree in Fort Myers loses money because a cloned voice threatened to cut their power, the public starts associating synthetic voice with fraud. That reframing matters for anyone whose career depends on clients trusting the voice on the other end of a contract.

What Regulators Are Starting to Notice

In February 2024 the FCC ruled that AI-generated voices in robocalls count as "artificial" under the Telephone Consumer Protection Act, which makes such calls illegal without the recipient's prior consent. State attorneys general have been building cases against specific bad actors since. Utility impersonation scams like the FPL one give enforcement agencies concrete, sympathetic victims, and that tends to accelerate regulatory response. For voice professionals, clearer rules on synthetic voice use are overdue and will likely benefit the legitimate side of the industry.

Why Verified Human Talent Matters More Now

Clients booking voiceover work are going to become more sensitive to questions of consent, licensing, and provenance. A brand that pulls a cheap synthetic voice from an unvetted platform is taking on reputational risk it did not have three years ago. If that voice turns out to be cloned from a real actor without permission, or worse, if it sounds close enough to a known person to invite a lawsuit, the savings disappear fast.

Human voice talent offers several things no cloned model can legally match:

- Documented consent. A signed contract with a living professional establishes exactly what the voice can be used for, where, and for how long.

- Performance direction. A real actor adjusts in real time to feedback, brand tone, and script nuance in ways synthetic pipelines handle poorly.

- Accountability. If a spot needs a revision or a tag, the talent is reachable. A cloned voice has no one on the other end.

- Brand safety. No client wants their commercial voice confused with the one calling grandparents about their electric bill.

How Platforms Like RealVOTalent Fit In

Marketplaces that verify their talent and handle licensing transparently become more valuable as the synthetic voice market gets murkier. On RealVOTalent, every voice belongs to an identifiable professional who signed up under their own name, with their own demos, under their own terms. That is a simple thing, but it is increasingly the baseline clients should demand from any voice source.

The RealVOTalent AI voice sentiment tracker gives a broader view of how buyers and talent are responding to synthetic voice across the industry. Stories like the FPL scam tend to shift that sentiment noticeably.

Practical Steps for Working VO Pros

A few things worth thinking about while this news cycle plays out:

- Check your contracts. Make sure any AI rights clauses are explicit and time-limited. Broad "use in any medium including synthetic reproduction" language is worth negotiating out.

- Watermark your demos where you can. Some demo hosts now offer subtle audio fingerprinting. It will not stop a determined cloner, but it creates evidence.

- Talk to your clients about provenance. Many buyers genuinely do not know which platforms verify talent and which do not. A short conversation can shift a booking decision.

- Report impersonation. If you find your voice cloned without permission, the FTC and your state attorney general both take complaints, and platform takedown requests are getting faster.

The FPL scam is a reminder that the same technology being sold to advertisers as a cost-saving tool is already being used to hurt people. The voiceover industry has a stake in how that gets regulated, and verified human talent is going to look more valuable the longer these stories keep coming.

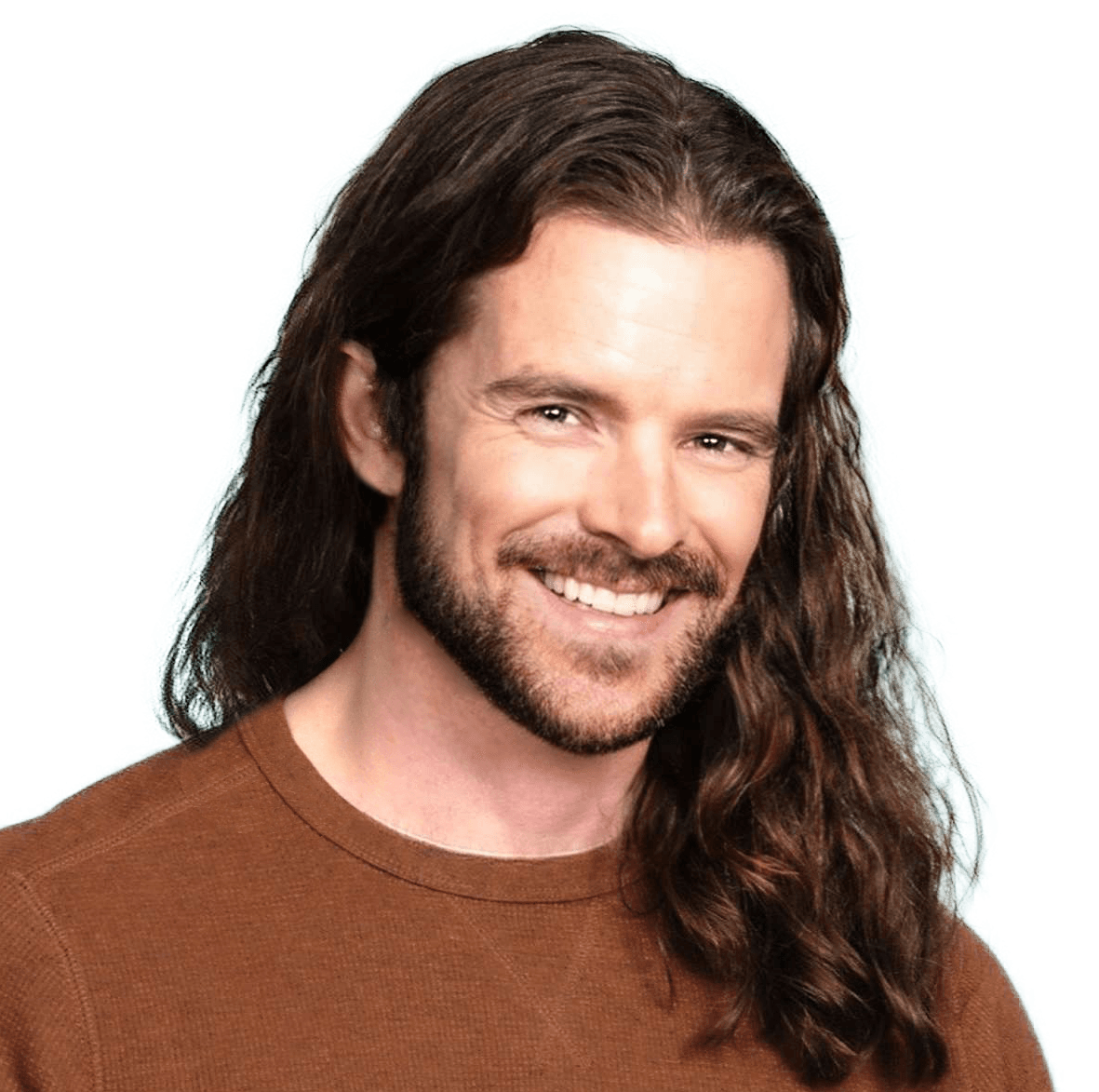

Written by

Trevor O'Hare

Founder, RealVOTalent

Trevor is a professional voice actor who has worked in audio for over two decades and been in the voiceover industry since 2019, completing thousands of projects for Fortune 500 companies and small businesses alike. He also coaches voice talent at VOTrainer.com.